Multimodal Sentiment & Emotion Analysis (DFG)

Technologies Used: PyTorch, LSTMs, Dynamic Fusion Graphs (DFG), GloVe, OpenFace, COVAREP

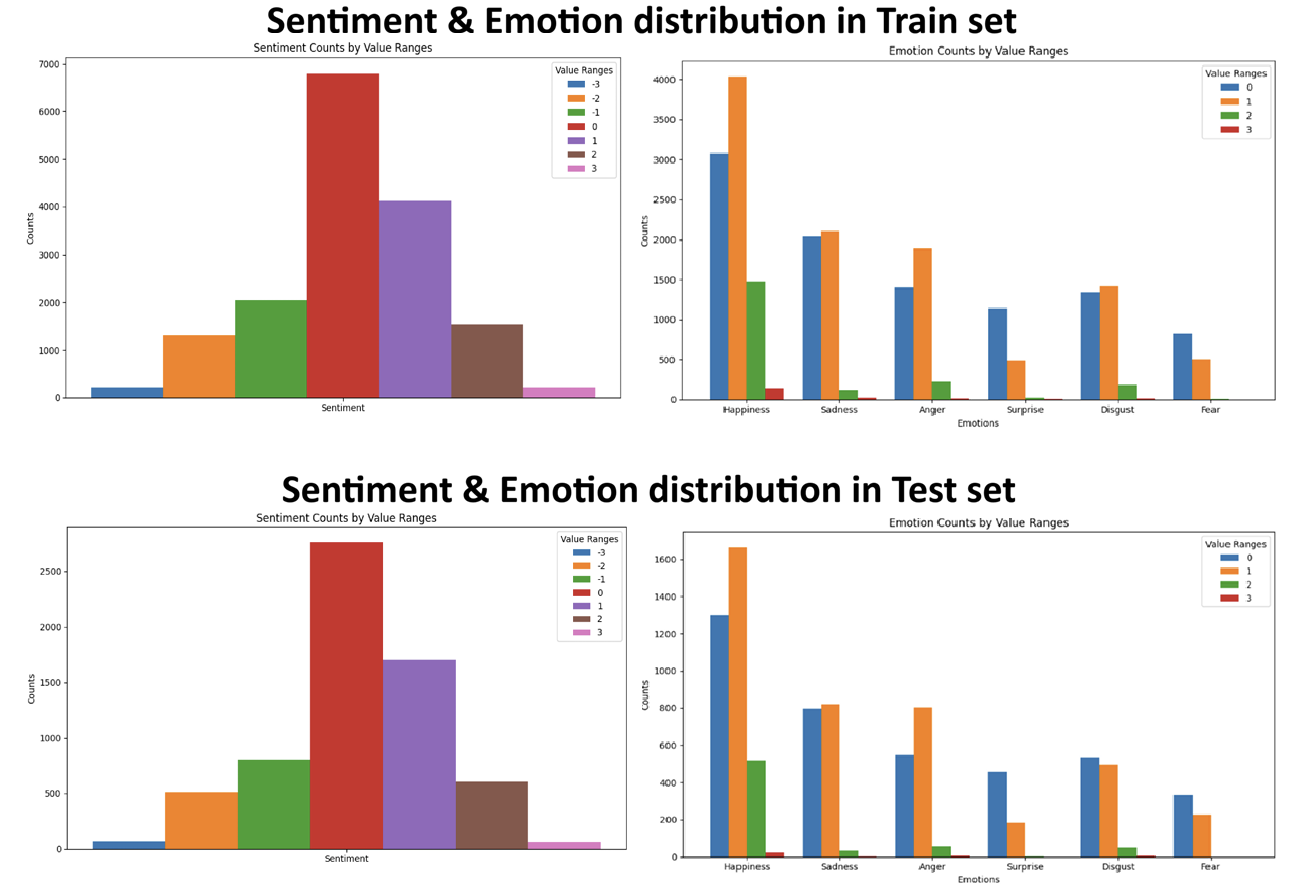

- Core Objective: Developed a deep learning system that predicts sentiment and the six Ekman emotions by fusing text, audio, and visual features from over 60 GB of aligned CMU-MOSEI data.

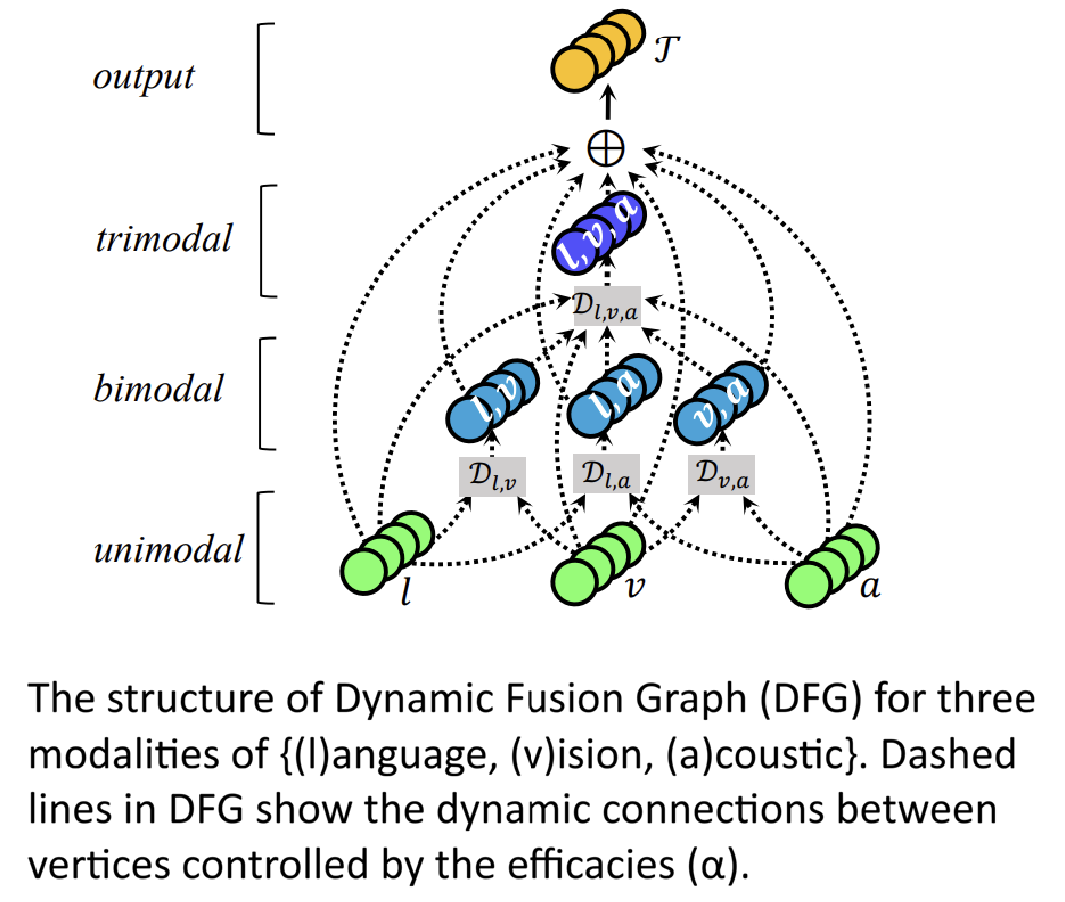

- Architecture Innovation: Implemented a Dynamic Fusion Graph (DFG) featuring modality-specific LSTMs and a multi-view gated memory network to learn efficacy scores for cross-modal interactions.

- Technical Implementation: Trained with the AdamW optimizer and a SmoothL1 loss. Used orthogonal initialization and layer normalization to stabilize trimodal fusion training.
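
The architecture and training choices above can be illustrated with a minimal PyTorch sketch. This is not the project's code and not a full DFG: it is a simplified stand-in that assumes one LSTM per modality, layer normalization on each final hidden state, and a single sigmoid gate per modality that plays the role of a learned efficacy score. The class name `GatedTrimodalFusion`, the feature sizes in `DIMS`, the hidden size, and the optimizer hyperparameters are all hypothetical.

```python
import torch
import torch.nn as nn

# Hypothetical per-modality feature sizes (GloVe text, COVAREP audio, OpenFace visual).
DIMS = {"text": 300, "audio": 74, "visual": 35}


class GatedTrimodalFusion(nn.Module):
    """Simplified stand-in for a DFG: per-modality LSTMs plus learned gates."""

    def __init__(self, dims=DIMS, hidden=64):
        super().__init__()
        self.names = sorted(dims)
        self.lstms = nn.ModuleDict(
            {m: nn.LSTM(dims[m], hidden, batch_first=True) for m in self.names}
        )
        self.norms = nn.ModuleDict({m: nn.LayerNorm(hidden) for m in self.names})
        # One sigmoid gate per modality, computed from all three summaries.
        self.gate = nn.Sequential(
            nn.Linear(hidden * len(self.names), len(self.names)), nn.Sigmoid()
        )
        self.head = nn.Linear(hidden, 1)
        # Orthogonal initialization for the LSTM weight matrices only.
        for name, param in self.named_parameters():
            if "lstm" in name and "weight" in name:
                nn.init.orthogonal_(param)

    def forward(self, inputs):
        summaries = []
        for m in self.names:
            _, (h_n, _) = self.lstms[m](inputs[m])       # h_n: (1, batch, hidden)
            summaries.append(self.norms[m](h_n[-1]))     # (batch, hidden)
        stacked = torch.stack(summaries, dim=1)           # (batch, 3, hidden)
        gates = self.gate(torch.cat(summaries, dim=-1))   # (batch, 3)
        fused = (gates.unsqueeze(-1) * stacked).sum(dim=1)
        return self.head(fused).squeeze(-1), gates


torch.manual_seed(0)
model = GatedTrimodalFusion()
optimizer = torch.optim.AdamW(model.parameters(), lr=1e-3, weight_decay=1e-2)
loss_fn = nn.SmoothL1Loss()

# Random tensors standing in for one aligned batch: 8 clips, 20 time steps each.
batch = {m: torch.randn(8, 20, d) for m, d in DIMS.items()}
target = torch.empty(8).uniform_(-3, 3)  # sentiment scores in [-3, 3]

# One training step.
pred, gates = model(batch)
loss = loss_fn(pred, target)
optimizer.zero_grad()
loss.backward()
optimizer.step()
```

The returned `gates` tensor holds one value in (0, 1) per modality and sample, which gives a rough view of how much each modality contributed to the fused representation.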

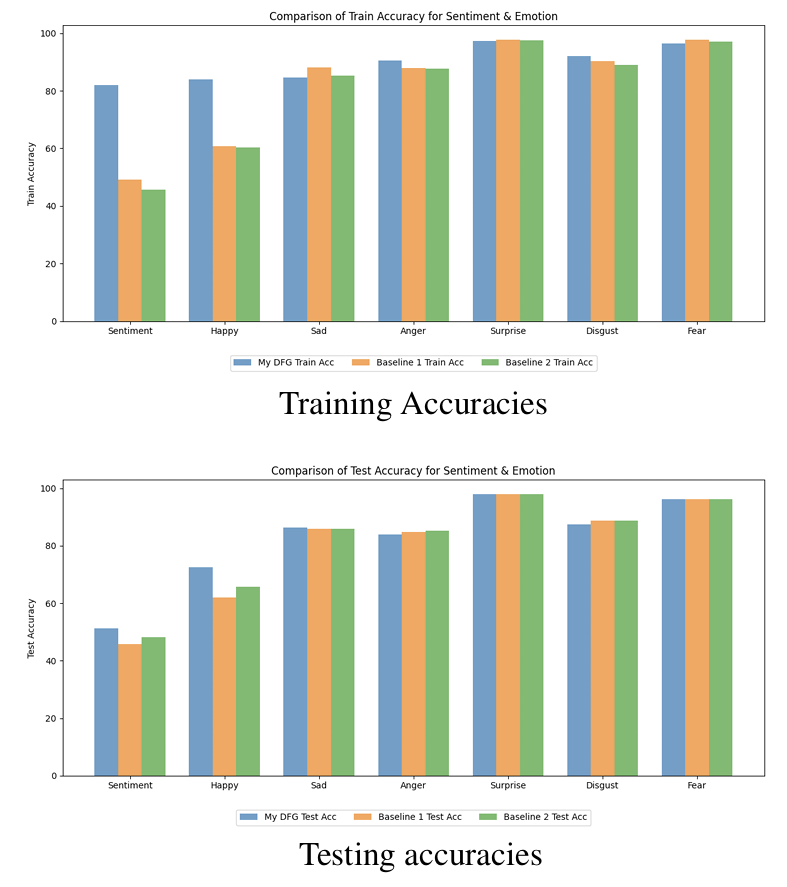

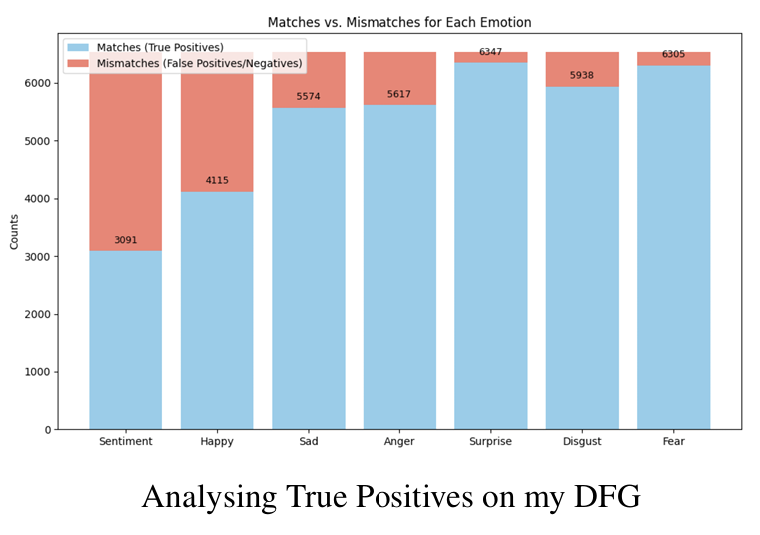

- Results: Achieved 80.8% test accuracy (F1: 79.0), outperforming unimodal and bimodal baselines in 5 out of 6 emotion categories.
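
For reference, accuracy and F1 for a regression-style sentiment model are typically derived by thresholding predictions and labels. The sketch below assumes a binary positive/negative split at 0, which the entry above does not state; the function name `binary_accuracy_and_f1` and the sample values are made up for illustration and are unrelated to the reported results.

```python
def binary_accuracy_and_f1(preds, targets, threshold=0.0):
    """Binarize scores at `threshold`, then compute accuracy and positive-class F1."""
    pred_pos = [p > threshold for p in preds]
    true_pos = [t > threshold for t in targets]
    pairs = list(zip(pred_pos, true_pos))

    tp = sum(1 for p, t in pairs if p and t)
    fp = sum(1 for p, t in pairs if p and not t)
    fn = sum(1 for p, t in pairs if not p and t)
    correct = sum(1 for p, t in pairs if p == t)

    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return accuracy, f1


# Made-up predictions and labels, for illustration only.
accuracy, f1 = binary_accuracy_and_f1(
    preds=[1.2, -0.4, 0.3, -2.0, 0.8],
    targets=[2.0, 0.5, -1.0, -1.5, 1.0],
)
```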